
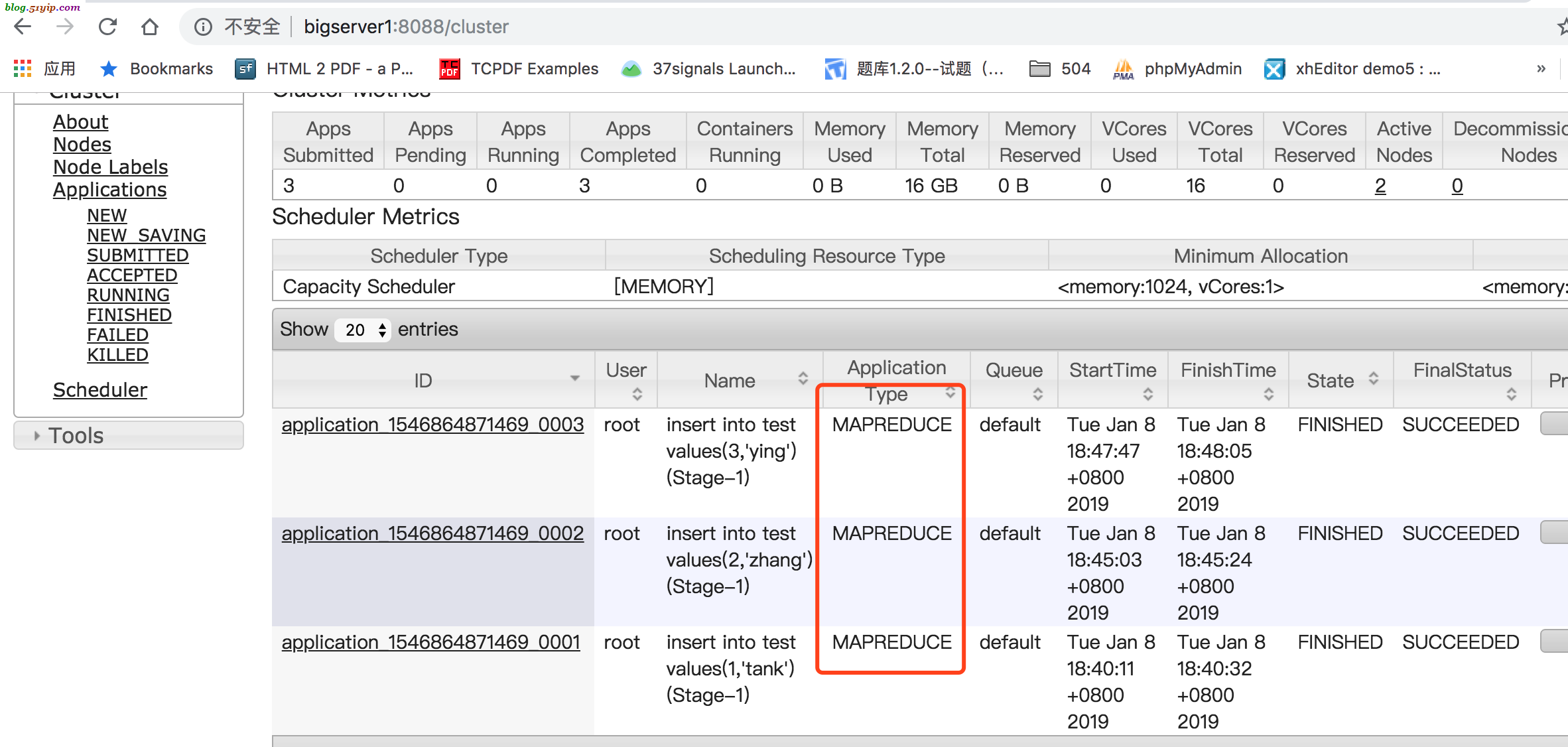

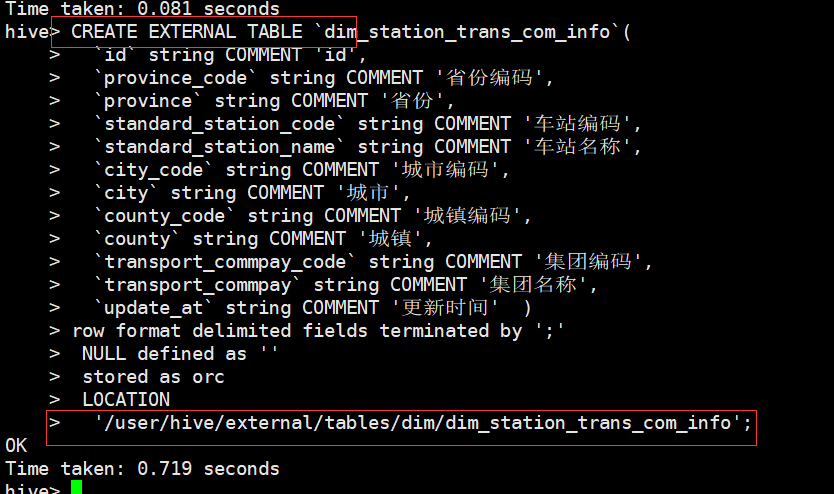

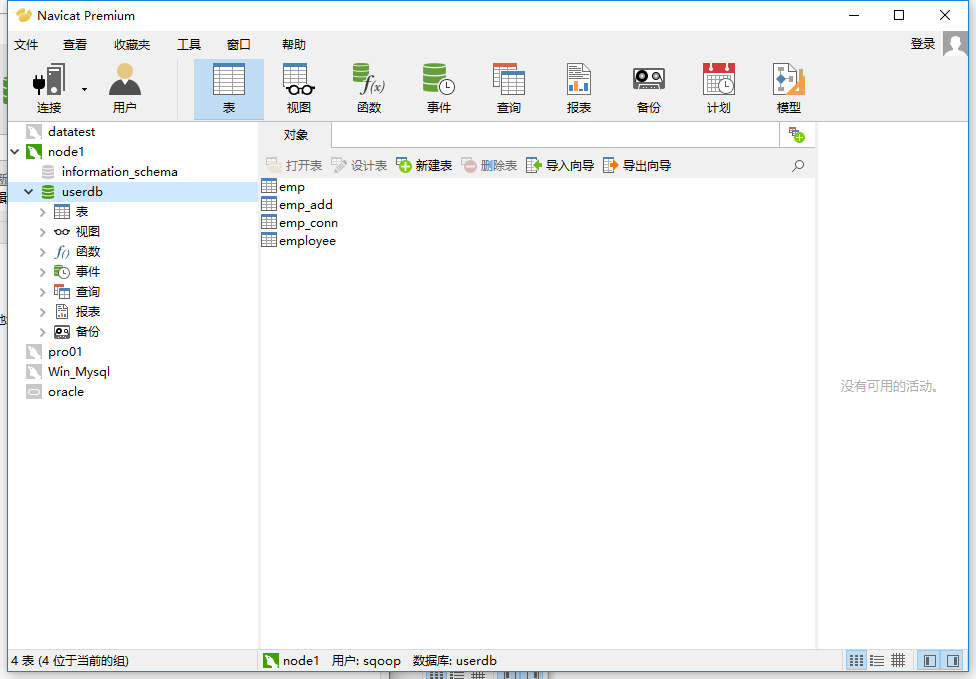

Prerequisites: this article assumes you have a Hadoop environment with Hive and Sqoop installed. It focuses only on how to import data from a MySQL table into HDFS and Hive.

Create a table in MySQL:

    create database Test;
    use Test;
    create table employee(empid varchar(50), name varchar(50), age varchar(2), salary float(10));
    desc employee;

Importing data to HDFS. Syntax to connect to MySQL and import:

    sqoop import --connect jdbc:mysql://

-P prompts the user to enter the password in the terminal. You can also pass the --password argument followed by the password instead of prompting the user, as we did in earlier examples, but the password is then visible on the command line. The best way is to read the password from a secure password file stored in HDFS or on the local file system.

--hive-import imports the table into Hive. --fields-terminated-by "," stores the rows as comma-separated values. --create-hive-table creates the Hive table; if the table already exists, Sqoop throws an error.

For this example I have created a database in Hive named sqoop_dbs, which is located in /usr/hive/warehouse.

Using the import tool to get data from MySQL:

    sqoop import -Dorg.apache.sqoop.splitter.allow_text_splitter=true --connect "jdbc:mysql://localhost:3306/Test?zeroDateTimeBehavior=round" --username root -P --split-by empid --table employee --columns "empid,name" --target-dir /user/hive/sqoop_mysql_hive/ --fields-terminated-by "," --hive-import --create-hive-table --hive-table sqoop_dbs.employees

The allow_text_splitter property is needed here because the --split-by column, empid, is a varchar. One thing to notice is that Sqoop first writes the data to the target directory and then loads it from there into the sqoop_dbs.employees table. Once the load completes, Sqoop deletes the target directory.

Now go to the Hive shell and run the commands shown in the screenshot to check the imported table.

For the next example we will add another table named customers to our existing database Test. Log in to the MySQL command prompt and run the queries below:

    use Test;
    create table customers(customerid varchar(50), name varchar(50), email varchar(50), phoneno varchar(20), city varchar(50), state varchar(50));
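The article recommends reading the password from a secure file but does not show it, so here is a minimal sketch of that approach using Sqoop's --password-file option in place of -P. The helper names (write_password_file, build_sqoop_import) and the path /user/hive/.mysql.pwd are hypothetical and not from the article. The script only prints the command so it can be reviewed before anything runs.

```shell
#!/usr/bin/env bash
# Sketch only: helper names, the password value, and the file paths
# are placeholders chosen for illustration, not taken from the article.

# Store the password with no trailing newline and make it owner-read-only.
# (Sqoop reads the file contents as the password, newline included.)
write_password_file() {
  local path="$1" password="$2"
  ( umask 077; printf '%s' "$password" > "$path" )
  chmod 400 "$path"
}

# Print the Hive import command from the article, one argument per line,
# with --password-file in place of the interactive -P prompt.
build_sqoop_import() {
  local password_file="$1"
  printf '%s\n' \
    sqoop import \
    -Dorg.apache.sqoop.splitter.allow_text_splitter=true \
    --connect 'jdbc:mysql://localhost:3306/Test?zeroDateTimeBehavior=round' \
    --username root \
    --password-file "$password_file" \
    --split-by empid \
    --table employee \
    --columns 'empid,name' \
    --target-dir /user/hive/sqoop_mysql_hive/ \
    --fields-terminated-by ',' \
    --hive-import \
    --create-hive-table \
    --hive-table sqoop_dbs.employees
}

# Example: show the command that would run (hypothetical HDFS path).
build_sqoop_import /user/hive/.mysql.pwd
```

One way to use this would be to create the file locally with write_password_file, copy it to HDFS with hdfs dfs -put, and then run the printed command by hand once it looks right.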
